The EU AI Omnibus Deal: What CTOs and CCOs Should Actually Do With the Extra Time

The EU AI Act high-risk deadline moved to December 2027. Here is how CTOs and CCOs should use the runway, which bans tightened, and why ISO 42001 is the practical play.

5/14/2026 · 4 min read

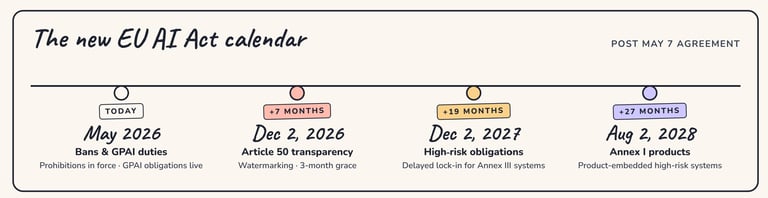

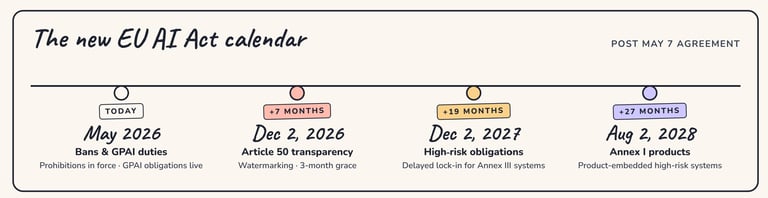

At 4:30 a.m. Brussels time on May 7, 2026, EU legislators closed a deal that quietly reshaped the AI compliance calendar for every enterprise with European customers, employees, or supply chain exposure. The agreement on the AI Omnibus, the regulation amending the EU AI Act, pushed the application date for high-risk AI obligations to December 2, 2027. High-risk systems embedded in products covered by Annex I get an even longer runway, until August 2, 2028.

Then the celebration emails started landing in inboxes. "We have more time." "AI compliance can move down the roadmap." "Push to FY28."

Anyone reading the deal carefully knows that response is wrong. The Omnibus is not a pause. It is a runway, and a short one. Here is what actually changed, and what your team should do this quarter.

"The extra time is real. The bans got broader, the watermarking deadline got tighter, and enforcement got more centralized. The CTOs and CCOs who win this window will not treat it as a pause."

What the Omnibus actually changed

Four substantive shifts matter for governance teams.

First, the high-risk obligation delay is real. Annex III systems, which cover employment, biometrics, critical infrastructure, education, law enforcement, justice, migration, and essential services, now apply from December 2, 2027. Annex I systems embedded as safety components in regulated products apply from August 2, 2028. The deal acknowledges that AI Act compliance requires significant preparation and the introduction of supporting technical standards. Brussels is buying time for the standards bodies as much as for industry.

Second, the prohibited use list got broader. The Omnibus explicitly bans AI systems used to generate non-consensual intimate imagery (NCII) and child sexual abuse material (CSAM), including so-called nudifier apps. Crucially, the ban applies to both providers and deployers. If your product line includes image or video generation, your prohibited use policy and your customer terms need a refresh now, not after standards are published.

Third, the watermarking deadline moved closer. Article 50 transparency obligations for synthetic content now have a 3-month grace period, down from 6 months, with the new effective date set to December 2, 2026. That is a 2026 deadline, not a 2027 one. Most companies have not started this work.

Fourth, enforcement was centralized. The AI Office now directly supervises AI systems that a provider builds on its own general-purpose AI model, while national regulators retain authority for law enforcement, border management, judicial systems, and financial supervision. The likely result is fewer ambiguous reporting lines and faster, more uniform enforcement across the bloc. Know which regulator owns your file before they introduce themselves.

How to actually use the runway

The runway is roughly 18 months for high-risk systems. That is about the time an honest, audited AI management system implementation takes. Wasting it means hitting the wall in late 2027 with a thinner talent market and an angrier regulator.

Five priorities for the next two quarters:

Inventory every AI system in production, in pilot, and in the shadow AI tier. A board-ready answer to the question "how many AI systems do we run, and who owns each one" is now table stakes. The Microsoft Agent 365 launch on May 1 and the Alation AI Governance launch on May 11 both signal that the vendor market expects you to have one.

Pick an anchor framework and commit. ISO 42001 has emerged as the most pragmatic choice: it already maps to roughly 70% of EU AI Act high risk documentation requirements, and roughly 40% of EU enterprise AI RFPs are now asking for it. The NIST AI RMF and its forthcoming Cyber AI Profile complement it well for US operations.

Build the watermarking and synthetic content disclosures now. December 2, 2026 is the real near-term deadline. Engineering needs lead time. Procurement and product marketing both have a stake.

Reset your prohibited use policy. The new NCII and CSAM bans require explicit policy language, contractual flow down to customers, and detection controls. Most policies written in 2024 do not address either.

Map your regulator and your enforcement path. The AI Office now owns more direct supervision. National regulators still matter for certain sectors. Know who calls first.
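To make the inventory priority concrete, here is a minimal sketch in Python, assuming a simple in-house register rather than any particular governance product. Every name in it (`AISystem`, `Tier`, `DEADLINES`, `next_deadline`, `triage`) is hypothetical, the tier labels are a simplification of the Act's categories, and the dates are the ones reported in this article for the Omnibus deal, so confirm them against the final published text before relying on them. It records an owner and lifecycle stage for each system and sorts the register by the nearest dated obligation.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date
from enum import Enum


class Tier(Enum):
    """Hypothetical obligation tiers; a simplification, not the Act's legal categories."""
    SYNTHETIC_CONTENT = "Article 50 transparency (synthetic content)"
    ANNEX_III = "Annex III high-risk"
    ANNEX_I = "Annex I product-embedded high-risk"
    MINIMAL = "No dated obligation tracked"


# Application dates as reported for the Omnibus deal; verify against the final text.
DEADLINES = {
    Tier.SYNTHETIC_CONTENT: date(2026, 12, 2),
    Tier.ANNEX_III: date(2027, 12, 2),
    Tier.ANNEX_I: date(2028, 8, 2),
}


@dataclass
class AISystem:
    name: str
    owner: str         # accountable person: answers "who owns each one"
    stage: str         # e.g. "production", "pilot", "shadow"
    tiers: list[Tier]  # one system can fall under several tiers


def next_deadline(system: AISystem) -> date | None:
    """Earliest dated obligation for this system, or None if no tier carries a date."""
    dates = [DEADLINES[t] for t in system.tiers if t in DEADLINES]
    return min(dates) if dates else None


def triage(inventory: list[AISystem], today: date) -> list[tuple[str, str, int]]:
    """Return (name, owner, days remaining), most urgent first; undated systems are skipped."""
    rows = []
    for system in inventory:
        due = next_deadline(system)
        if due is not None:
            rows.append((system.name, system.owner, (due - today).days))
    return sorted(rows, key=lambda row: row[2])


if __name__ == "__main__":
    # Hypothetical example register.
    inventory = [
        AISystem("resume-screener", "HR Ops lead", "production", [Tier.ANNEX_III]),
        AISystem("marketing-image-gen", "Growth PM", "pilot", [Tier.SYNTHETIC_CONTENT]),
        AISystem("internal-search", "IT lead", "shadow", [Tier.MINIMAL]),
    ]
    for row in triage(inventory, today=date(2026, 5, 14)):
        print(row)
    # ('marketing-image-gen', 'Growth PM', 202)
    # ('resume-screener', 'HR Ops lead', 567)
```

Sorting by the earliest applicable date is a deliberate choice: a system that both falls under Annex III and generates synthetic content surfaces at the December 2026 watermarking date, not the December 2027 high-risk date.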

The strategic read

The Omnibus deal is the EU acknowledging that the AI Act, as written, was outrunning the technical standards needed to comply with it. The fix bought time, but it also signaled that enforcement is coming, that the prohibited use list will keep expanding, and that the AI Office intends to be a real regulator, not a paper one.

Compounding the picture, US state laws are not slowing down. Connecticut passed an AI online safety bill on May 1 with a private right of action component. Colorado's AI Act enforcement was stayed, but a replacement bill is moving through the legislature this week. California, New York, and Texas are all watching. The patchwork is real.

The companies that pull ahead in 2027 and 2028 will be the ones that treat May 2026 as the start of the implementation window, not the end of the urgency. The work is now: inventory, framework, policy, engineering for transparency, regulator mapping. Done well, it also speeds up releases instead of sitting on the books as a purely defensive line item.

Dynamic Comply helps CTOs and CCOs build AI governance programs that earn trust from regulators, customers, and boards alike. If you want a pragmatic, opinionated roadmap for your AI management system, anchored to ISO 42001 and built for the EU AI Act timeline, visit dynamiccomply.com to start the conversation.



© 2026. All rights reserved.